Krea's new model drops the AI look

PLUS: Google's Gemini Omni video editor surfaces ahead of I/O, and Thinking Machines Lab previews real-time AI

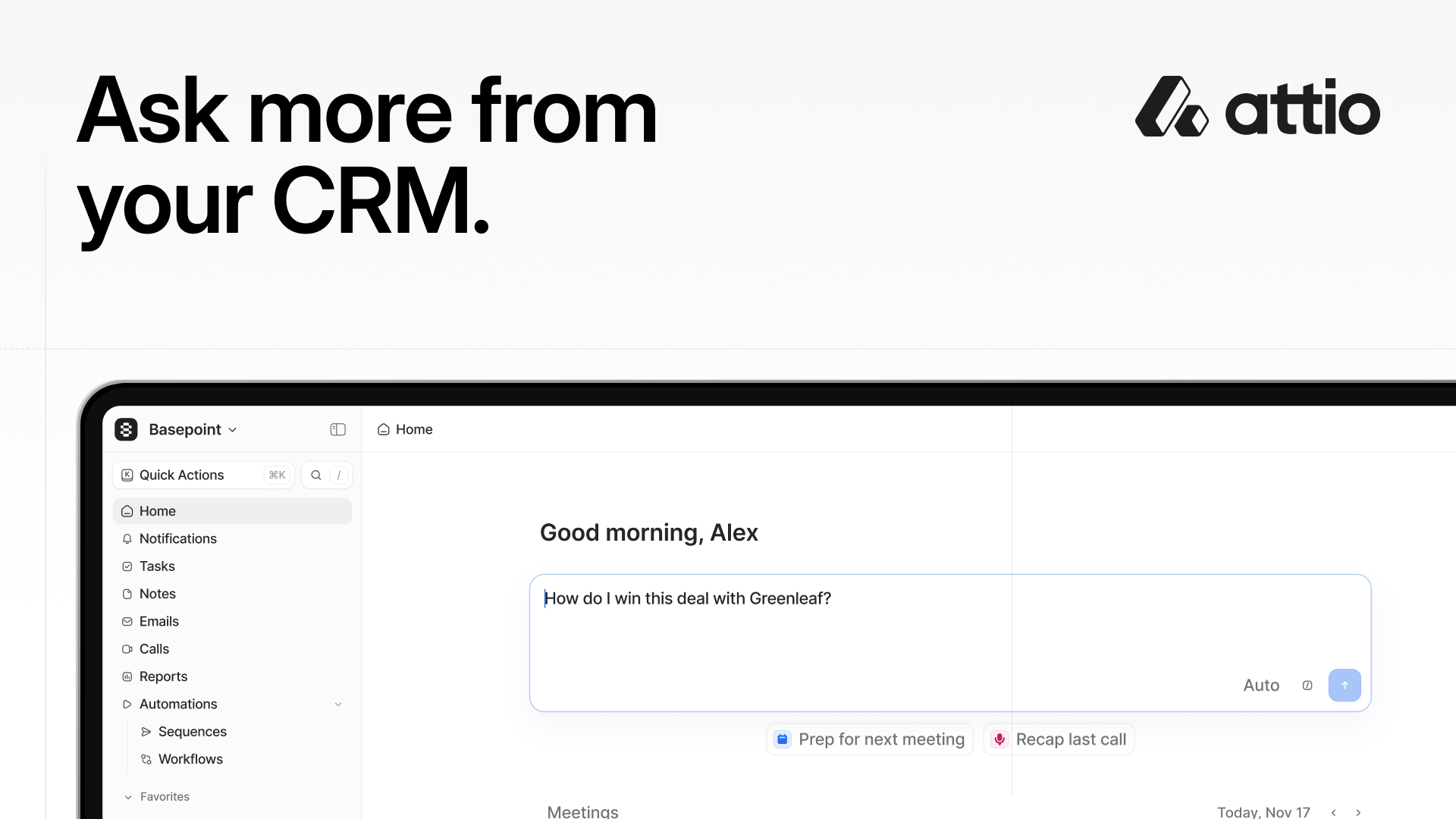

Krea just launched its first foundation image model built entirely from scratch — and the whole design philosophy is a direct rejection of the polished, same-ish output that most AI image generators produce. K2 uses moodboards and style references to give artists real control over aesthetic direction.

Will models built around creative flexibility rather than photorealistic accuracy become the new standard for art-focused AI tools? Krea is betting they will — and it’s building everything in-house to prove it.

Today in AI:

- Krea’s K2 launches with moodboards and style control
- Google’s Gemini Omni video editor surfaces ahead of I/O
- Thinking Machines Lab opens a real-time AI interaction preview

What’s new? Krea launched K2, its first image foundation model built entirely from scratch, designed to prioritize aesthetic diversity and creative control. It specifically targets the over-polished, homogeneous output that defines most AI image generators.

What matters?

K2’s standout feature is moodboards: users combine multiple reference images to blend their styles into a single generation, giving artists more layered control than single-reference or text-prompt approaches allow.

The model generates images in under 15 seconds and treats style as an active tool — letting creators guide, mix, strengthen, or reduce visual directions using style references rather than vague prompt words.

Krea built K2 against the “AI look” by design — the team describes the goal as making AI work as “an actual creative medium. Something that feels raw, flexible, unopinionated, and unconstrained.”

Why does it matter?

The AI image space has converged around a recognizable aesthetic — smooth, polished, and increasingly predictable. K2’s philosophy of raw, unconstrained generation could give creative professionals a genuinely flexible alternative they can actually shape.

What’s new? Google’s upcoming Gemini Omni video model appeared in early testing this week, showing a system that can remix existing videos, edit footage directly inside Gemini chat, and generate clips from ready-made templates — ahead of its expected official debut at Google I/O on May 19-20.

What matters?

Unlike Google’s previous video tools, Gemini Omni is built on a unified multimodal backbone, handling text, image, video, and audio in one system rather than stitching separate models together.

The model’s early demos showed strong in-chat editing: removing watermarks, swapping objects within clips, and rewriting scenes using natural language prompts.

Generated clips currently run roughly 10 seconds, with scene-extension updates planned for creators who need longer takes.

Why does it matter?

Editing video directly inside a chat interface removes a major context switch for creators who currently bounce between generation and editing tools. If Gemini Omni delivers at its official launch, it puts Google in direct competition with dedicated video tools like Runway and Pika, and it does so from inside the Gemini interface creators already use.

What’s new? Mira Murati’s Thinking Machines Lab opened a limited research preview of its interaction models — a new approach to AI that processes audio, video, and text simultaneously in 200-millisecond micro-turns, replacing traditional turn-based conversation with continuous real-time collaboration.

What matters?

The system responds in an average of 0.4 seconds — nearly 3x faster than GPT-Realtime-2’s 1.18-second average — because it processes input and output at the same time rather than waiting for a user to finish speaking.

The architecture uses two models: a live interaction model that stays engaged with the user, and a background reasoning model that handles slower tasks asynchronously without interrupting the conversation.

Semafor reports the model can react to visual cues, count repetitions, and initiate responses at scheduled moments — behaviors that require continuous observation, not turn-based listening.
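For readers who want a feel for the two-model split, here is a toy sketch in Python. It is not Thinking Machines Lab's code or API: `live_loop`, `slow_reasoner`, and the "count my reps" task are hypothetical stand-ins, and the only detail taken from the announcement is the 200-millisecond micro-turn. The point is simply that the live loop keeps ticking while a slower job runs in the background.

```python
import asyncio

TICK_SECONDS = 0.2  # 200 ms micro-turn, the figure quoted in the announcement


async def slow_reasoner(question: str, delay: float) -> str:
    """Hypothetical background reasoning model: slow, runs off the live loop."""
    await asyncio.sleep(delay)  # stand-in for a long-running reasoning step
    return f"answer to {question!r}"


async def live_loop(num_ticks: int, tick: float = TICK_SECONDS) -> list[str]:
    """Hypothetical live interaction model: produces output every micro-turn."""
    log: list[str] = []
    # Start the slow task without awaiting it, so the loop below never blocks on it.
    pending = asyncio.create_task(slow_reasoner("count my reps", delay=tick * 2.5))
    for i in range(num_ticks):
        await asyncio.sleep(tick)  # stand-in for ingesting one slice of audio/video
        if pending is not None and pending.done():
            # The background result is ready: fold it into this micro-turn.
            log.append(f"tick {i}: {pending.result()}")
            pending = None
        else:
            log.append(f"tick {i}: still listening")
    if pending is not None:
        pending.cancel()  # the loop ended before the background task finished
    return log


if __name__ == "__main__":
    for line in asyncio.run(live_loop(num_ticks=5)):
        print(line)
```

Running it prints one line per micro-turn, with the background answer surfacing partway through rather than stalling the conversation. A real system would stream audio and video instead of sleeping, but the scheduling idea is the same.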

Why does it matter?

Murati’s core argument is that today’s interactive AI is a turn-based language model with speech detection bolted on — a workaround, not a native design. Real-time AI that can watch, listen, and respond simultaneously could reshape how creators collaborate with AI in live, improvised workflows.

Everything else in AI

Google unveiled Googlebook, a new AI laptop category built around Gemini Intelligence with a “Magic Pointer” cursor that surfaces contextual AI suggestions whenever you hover over anything on screen.

Isomorphic Labs raised $2.1 billion in Series B funding — one of the largest private rounds in AI history — led by Thrive Capital and backed by Alphabet, to scale its AI drug design engine.

Fans are making AI-generated videos that insert themselves into live sports broadcasts, with a Korean baseball clip racking up over 15 million views and the trend now spreading to cricket and US sports events.
